Intelligent Driving Fusion Algorithm Research: sparse algorithms, temporal fusion and enhanced planning and control become the trend.

China Intelligent Driving Fusion Algorithm Research Report, 2024, released by ResearchInChina, analyzes the status quo of intelligent driving fusion algorithms (including perception, positioning, prediction, planning, decision, etc.), sorts out the algorithm solutions and cases of chip vendors, OEMs, Tier 1 and Tier 2 suppliers and L4 algorithm providers, and summarizes the development trends of intelligent driving algorithms.

In the eight months between Musk's live test drive of FSD V12 Beta in August 2023 and the 30-day free trial of FSD V12 Supervised in March 2024, advanced intelligent driving such as urban NOA has become a major battleground for OEMs, and application cases of end-to-end algorithms, BEV Transformer algorithms and AI foundation model algorithms keep multiplying.

1. Sparse algorithms improve efficiency and reduce intelligent driving cost.

At present, most BEV algorithms are dense and consume considerable computing power and storage. Running them smoothly at more than 30 frames per second requires expensive computing resources such as the NVIDIA A100, and even then only 5 to 6 2MP cameras can be supported. For 8MP cameras, extremely expensive resources such as multiple H100 GPUs are needed.
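To make the camera-resolution scaling concrete, here is a back-of-the-envelope sketch of the raw pixel throughput a perception backbone must ingest. It assumes 1920×1080 for a 2MP camera, 3840×2160 for an 8MP camera and 30 FPS; these figures are assumptions, not from the report. Raw pixels are only a proxy: actual compute depends on the backbone and the BEV view transform.

```python
# Back-of-the-envelope pixel throughput for a multi-camera rig.
# Assumed (not from the report): 2MP ~ 1920x1080, 8MP ~ 3840x2160, 30 FPS.
RESOLUTIONS = {
    "2MP": (1920, 1080),
    "8MP": (3840, 2160),
}


def pixels_per_second(resolution: str, num_cameras: int, fps: int = 30) -> int:
    """Raw input pixels per second across all cameras."""
    width, height = RESOLUTIONS[resolution]
    return width * height * num_cameras * fps


if __name__ == "__main__":
    base = pixels_per_second("2MP", 6)
    high = pixels_per_second("8MP", 6)
    print(f"6 x 2MP @ 30 FPS: {base / 1e6:.1f} Mpx/s")
    print(f"6 x 8MP @ 30 FPS: {high / 1e6:.1f} Mpx/s ({high / base:.0f}x)")
```

Under these assumptions, moving the same six cameras from 2MP to 8MP quadruples the input load. That is consistent with the jump from a single A100 to multiple H100s described above.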

The real world is inherently sparse, and sparsification helps reduce sensor noise and improve robustness. In addition, grid cells inevitably become sparse as distance increases, so a dense network can only be maintained within about 50 meters. By reducing queries and feature interactions, sparse perception algorithms speed up computation and lower storage requirements. They greatly improve the computing efficiency and system performance of the perception model, shorten system latency, extend the range over which perception stays accurate, and reduce the impact of vehicle speed.
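The range argument can be illustrated by counting cells. A dense BEV grid grows quadratically with perception range, while a sparse detector keeps a fixed budget of object queries. The sketch assumes a square grid centred on the ego vehicle, 0.5 m cells and 900 queries. These are hypothetical values chosen for illustration, not parameters from the report.

```python
# Dense BEV cell count versus a fixed sparse query budget.
# Hypothetical settings: square ego-centred grid, 0.5 m cells, 900 sparse queries.

SPARSE_QUERIES = 900  # hypothetical fixed number of instance queries


def dense_bev_cells(range_m: float, cell_size_m: float = 0.5) -> int:
    """Cells in a square BEV grid covering [-range_m, range_m] in x and y."""
    cells_per_side = int(round(2 * range_m / cell_size_m))
    return cells_per_side ** 2


if __name__ == "__main__":
    for r in (50, 100, 150):
        print(f"range {r:>3} m: {dense_bev_cells(r):>7} dense cells "
              f"vs {SPARSE_QUERIES} sparse queries")
```

Doubling the range from 50 m to 100 m multiplies the dense cell count by four (40,000 to 160,000), while the sparse query count does not depend on range. Per-query work can still grow with the number of cameras or frames sampled.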

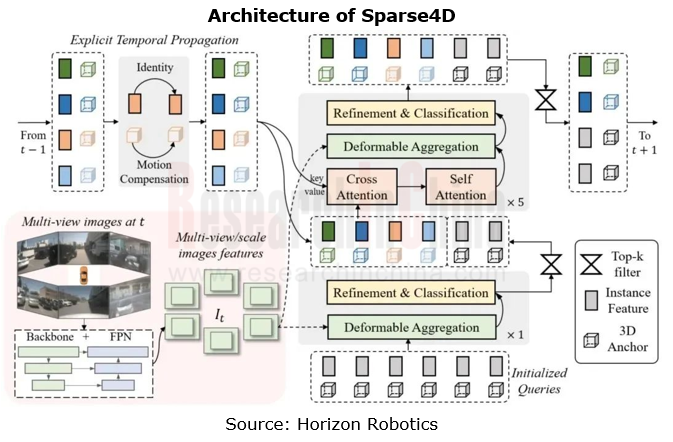

Since 2021, academia has therefore shifted from dense grid-based algorithms to sparse target-level algorithms. After years of effort, sparse target-level algorithms now perform almost as well as dense grid-based ones. Industry also keeps iterating on sparse algorithms. Recently, Horizon Robotics open-sourced Sparse4D, its vision-only algorithm, which ranks first on both the nuScenes vision-only 3D detection and 3D tracking leaderboards.

Sparse4D is a series of algorithms aimed at long-sequence sparse 3D object detection, within the scope of multi-view temporal fusion perception. In line with the industry trend toward sparse perception, Sparse4D builds a purely sparse fusion perception framework, which makes perception algorithms more efficient and accurate and simplifies perception systems. Compared with dense BEV algorithms, Sparse4D reduces computational complexity, removes the constraint that computing power places on perception range, and outperforms dense BEV algorithms in both perception quality and inference speed.
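The general idea behind sparse, query-based perception can be sketched as follows. Each object anchor samples image features only at a few of its own 3D keypoints projected into each camera, instead of lifting every pixel into a dense BEV grid. This is a simplified illustration, not Horizon Robotics' Sparse4D code. The function names, nearest-neighbour sampling and plain averaging are assumptions. Production sparse detectors such as the Sparse4D family typically add learned keypoint offsets, multi-scale and multi-frame features, and learned fusion weights.

```python
import numpy as np


def project_to_image(points_ego, intrinsics, ego_to_cam):
    """Project N x 3 ego-frame points to pixels for one pinhole camera.

    intrinsics: 3 x 3 matrix; ego_to_cam: 4 x 4 rigid transform.
    Returns (uv, in_front), where in_front flags points with positive depth.
    """
    homo = np.hstack([points_ego, np.ones((len(points_ego), 1))])
    cam = (ego_to_cam @ homo.T).T[:, :3]
    in_front = cam[:, 2] > 1e-3
    pix = (intrinsics @ cam.T).T
    depth = np.where(in_front, pix[:, 2], 1.0)  # avoid dividing by zero/negative depth
    return pix[:, :2] / depth[:, None], in_front


def aggregate_instance_feature(anchor_center, keypoint_offsets, cameras):
    """Average features sampled at an anchor's 3D keypoints across all cameras.

    Only (num_keypoints x num_cameras) pixels are touched per anchor.
    cameras: list of (feature_map H x W x C, intrinsics, ego_to_cam).
    """
    keypoints = anchor_center[None, :] + keypoint_offsets  # K x 3
    total = np.zeros(cameras[0][0].shape[2])
    count = 0
    for feat, K, T in cameras:
        h, w, _ = feat.shape
        uv, ok = project_to_image(keypoints, K, T)
        u = np.round(uv[:, 0]).astype(int)
        v = np.round(uv[:, 1]).astype(int)
        ok &= (u >= 0) & (u < w) & (v >= 0) & (v < h)  # keep in-image samples only
        total += feat[v[ok], u[ok]].sum(axis=0)
        count += int(ok.sum())
    return total / max(count, 1)


if __name__ == "__main__":
    # Toy camera looking along +z; the single feature channel stores the column u.
    K = np.array([[100.0, 0, 50], [0, 100.0, 50], [0, 0, 1]])
    feat = np.tile(np.arange(100, dtype=float)[None, :, None], (100, 1, 1))
    offsets = np.array([[0.0, 0, 0], [0.5, 0, 0], [-0.5, 0, 0]])
    print(aggregate_instance_feature(np.array([0.0, 0, 10]), offsets,
                                     [(feat, K, np.eye(4))]))  # -> [50.]
```

The cost of this step scales with the number of anchors × keypoints × cameras rather than with the area of a BEV grid. This is the efficiency argument made for sparse frameworks.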

Another significant advantage of sparse algorithms is that they cut the cost of intelligent driving solutions by reducing dependence on sensors and consuming less computing power. For example, Megvii Technology stated that by optimizing the BEV algorithm, reducing computing power, removing HD maps, RTK and LiDAR, unifying the algorithm framework and adopting automatic annotation, it has lowered the cost of its intelligent driving solutions based on PETR series sparse algorithms by 20%-30% compared with conventional solutions on the market.

2. 4D algorithms offer higher accuracy and make intelligent driving more reliable.

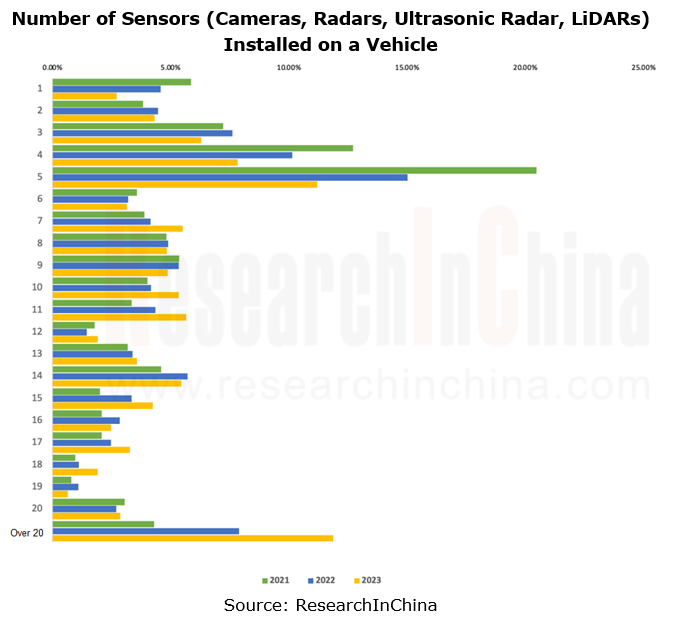

OEMs' sensor configurations show that over the past three years ever more sensors have been installed as intelligent driving functions and application scenarios expand. Most urban NOA solutions are equipped with 10-12 cameras, 3-5 millimeter-wave radars, 12 ultrasonic radars and 1-3 LiDARs.

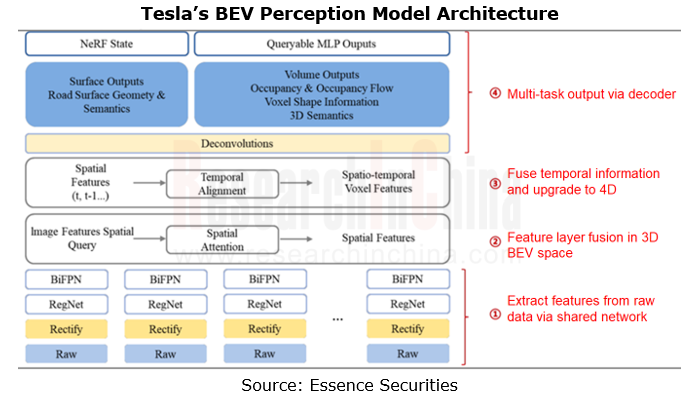

As the number of sensors grows, ever more perception data are generated, and improving the utilization of these data is now on the agenda of OEMs and algorithm providers. Although algorithm details differ somewhat between companies, the general approach of current mainstream BEV Transformer solutions is basically the same: converting from 2D to 3D and then to 4D.

Temporal fusion can greatly improve algorithm continuity. Memory of obstacles helps handle occlusion and allows better perception of speed, while memory of road signs improves driving safety and the accuracy of vehicle behavior prediction. Fusing information from historical frames improves the perception accuracy of the current object, and fusing information from future frames can verify object perception results, enhancing the reliability and accuracy of the algorithm.
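The occlusion-handling and speed-estimation benefits of memory can be illustrated with a deliberately simple object-level analogue. BEV Transformer models usually fuse feature maps or queries across frames, which this sketch does not attempt. The class names and the `max_missed` threshold are hypothetical. The sketch assumes detections are already associated by ID and uses constant-velocity extrapolation while an object is hidden.

```python
from dataclasses import dataclass, field


@dataclass
class TrackedObject:
    track_id: int
    history: list = field(default_factory=list)  # (timestamp_s, x_m, y_m) tuples
    missed_frames: int = 0

    def velocity(self):
        """Finite-difference velocity (m/s) from the two latest observations."""
        if len(self.history) < 2:
            return (0.0, 0.0)
        (t0, x0, y0), (t1, x1, y1) = self.history[-2], self.history[-1]
        dt = t1 - t0
        return ((x1 - x0) / dt, (y1 - y0) / dt)


class TemporalMemory:
    """Keeps objects alive through short occlusions and estimates their speed."""

    def __init__(self, max_missed: int = 5):
        self.max_missed = max_missed  # hypothetical occlusion tolerance in frames
        self.tracks = {}

    def update(self, timestamp, detections):
        """detections: dict of track_id -> (x, y) observed in this frame."""
        for tid, (x, y) in detections.items():
            track = self.tracks.setdefault(tid, TrackedObject(tid))
            track.history.append((timestamp, x, y))
            track.missed_frames = 0
        for tid in list(self.tracks):
            if tid not in detections:
                self.tracks[tid].missed_frames += 1
                if self.tracks[tid].missed_frames > self.max_missed:
                    del self.tracks[tid]  # forget objects hidden for too long

    def predicted_position(self, tid, timestamp):
        """Constant-velocity extrapolation from the last observation."""
        track = self.tracks[tid]
        t, x, y = track.history[-1]
        vx, vy = track.velocity()
        return (x + vx * (timestamp - t), y + vy * (timestamp - t))


if __name__ == "__main__":
    mem = TemporalMemory(max_missed=2)
    mem.update(0.0, {1: (10.0, 0.0)})
    mem.update(0.5, {1: (15.0, 0.0)})        # moved 5 m in 0.5 s
    mem.update(1.0, {})                      # occluded, but still remembered
    print(mem.tracks[1].velocity())          # (10.0, 0.0)
    print(mem.predicted_position(1, 1.0))    # (20.0, 0.0)
```

A single-frame detector would lose the object in the third frame and could not report its speed at all. The memory keeps both the object and its estimated speed alive through the occlusion.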


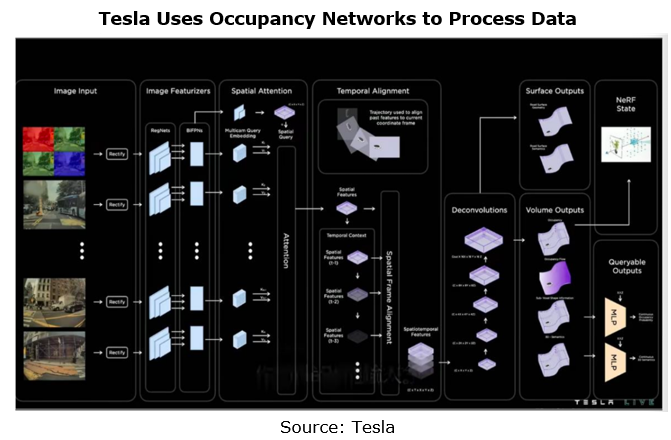

Tesla's Occupancy Network algorithm is a typical 4D algorithm.

Tesla adds height information to the 2D BEV + temporal vector space output by its original Transformer algorithm, building a 4D spatial representation of 3D BEV + temporal information. The network runs every 10 ms on the FSD computer, i.e., at 100 FPS, which greatly improves detection speed.
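The "3D space + time" layout and the 10 ms / 100 FPS figure can be sketched as a rolling buffer of 3D occupancy grids. This illustrates the data layout only; Tesla's network and fusion rule are not described at this level in the report. The grid size, history length and exponential-decay fusion are hypothetical. The sketch also assumes all grids are already ego-motion compensated, whereas a real system must warp past grids before fusing them.

```python
from collections import deque

import numpy as np

FRAME_PERIOD_MS = 10                   # from the text: the network runs every 10 ms
FPS = 1000 // FRAME_PERIOD_MS          # -> 100 frames per second


class OccupancyHistory:
    """Rolling buffer of 3D occupancy grids, i.e. a (T, X, Y, Z) 4D representation.

    Assumes all grids share one ego-motion-compensated frame (hypothetical).
    """

    def __init__(self, shape_xyz, horizon_frames):
        self.shape = tuple(shape_xyz)
        self.frames = deque(maxlen=horizon_frames)  # oldest grids drop off automatically

    def push(self, occupancy_prob):
        assert occupancy_prob.shape == self.shape
        self.frames.append(occupancy_prob.astype(np.float32))

    def as_4d(self):
        """Stack the buffer into a (T, X, Y, Z) array, oldest frame first."""
        return np.stack(self.frames, axis=0)

    def fused(self, decay=0.8):
        """Exponentially weighted average that favours recent frames (hypothetical rule)."""
        stack = self.as_4d()
        t = stack.shape[0]
        weights = decay ** np.arange(t - 1, -1, -1, dtype=np.float32)
        weights /= weights.sum()
        return np.tensordot(weights, stack, axes=(0, 0))


if __name__ == "__main__":
    hist = OccupancyHistory((100, 100, 8), horizon_frames=10)  # 10 frames = 100 ms at 100 FPS
    for _ in range(12):
        hist.push(np.random.rand(100, 100, 8))
    print(FPS, hist.as_4d().shape, hist.fused().shape)
```

At 100 FPS, a 10-frame buffer covers 100 ms of history. The `maxlen` of the deque keeps memory bounded regardless of how long the network runs.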

3. End-to-end algorithms integrating perception, planning and control enable more anthropomorphic intelligent driving.

Mainstream intelligent driving algorithms have adopted the "BEV+Transformer" architecture, and many innovative perception algorithms have emerged. However, rule-based algorithms still prevail in planning and control. Some OEMs face technical and practical challenges in both perception and planning & control, and the two systems are sometimes "split" from each other. In some complex scenarios, the perception module may fail to accurately recognize or understand environmental information, and the decision module may make incorrect driving decisions because of improper handling of perception results or algorithm limitations. This restricts the development of advanced intelligent driving to some extent.

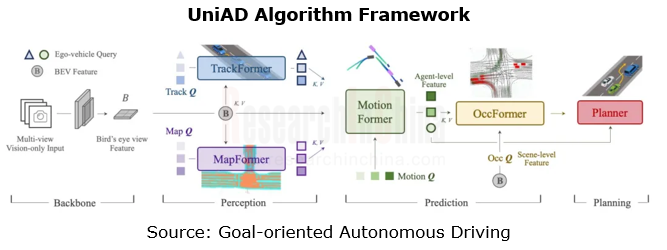

UniAD, an end-to-end intelligent driving algorithm jointly released by SenseTime, OpenDriveLab and Horizon Robotics, won the Best Paper Award at CVPR 2023. For the first time, UniAD integrates three main tasks (perception, prediction and planning) and six sub-tasks (object detection, object tracking, scene mapping, trajectory prediction, occupancy grid prediction and path planning) into a unified Transformer-based end-to-end network, forming a general model for the full driving stack. On the real-world nuScenes dataset, UniAD achieves the best results in the field on all tasks, and its prediction and planning results are far better than those of the previous best solution.

A basic end-to-end algorithm maps sensor inputs directly to predicted control outputs. However, it is difficult to optimize because it lacks effective feature communication between network modules and effective interaction between tasks, and it must output results stage by stage. UniAD instead proposes a decision-oriented design that integrates perception and decision. It uses token features for deep fusion along the perception-prediction-decision flow, so that the metrics of all decision-oriented tasks improve consistently.
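The token-passing idea can be sketched at the level of data flow. Each stage refines query tokens and hands them to the next, so the whole chain can be optimized toward planning. This is a toy with random, untrained weights and a simplified attention without learned projections. The stage names mirror the sub-tasks listed above, but the wiring, token width and output head are hypothetical simplifications, not UniAD's actual architecture or code.

```python
import numpy as np

rng = np.random.default_rng(0)
D = 32  # hypothetical token width


def attend(queries, keys_values):
    """Single-head scaled dot-product attention with a residual update."""
    scores = queries @ keys_values.T / np.sqrt(queries.shape[1])
    scores -= scores.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)
    return queries + weights @ keys_values


def planning_oriented_forward(bev_tokens, num_agents=8, num_map=16, horizon=6):
    """Pass query tokens from perception to prediction to planning.

    Random initial queries stand in for learned query embeddings (hypothetical).
    """
    track_q = attend(rng.standard_normal((num_agents, D)), bev_tokens)  # detection + tracking
    map_q = attend(rng.standard_normal((num_map, D)), bev_tokens)       # scene mapping
    motion_q = attend(track_q, np.vstack([map_q, bev_tokens]))          # trajectory prediction
    occ_tokens = attend(bev_tokens, motion_q)                           # occupancy prediction
    plan_q = attend(rng.standard_normal((1, D)),
                    np.vstack([motion_q, occ_tokens]))                  # planning query
    w_out = rng.standard_normal((D, horizon * 2)) / np.sqrt(D)          # hypothetical head
    return (plan_q @ w_out).reshape(horizon, 2)                         # (x, y) waypoints


if __name__ == "__main__":
    bev = rng.standard_normal((100, D))  # e.g. a 10 x 10 BEV feature map, flattened
    print(planning_oriented_forward(bev).shape)  # (6, 2)
```

Because every stage consumes the previous stage's tokens rather than hard-coded outputs, a planning loss can, in a trained system, propagate gradients back through prediction and perception. This is the consistency argument made above.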

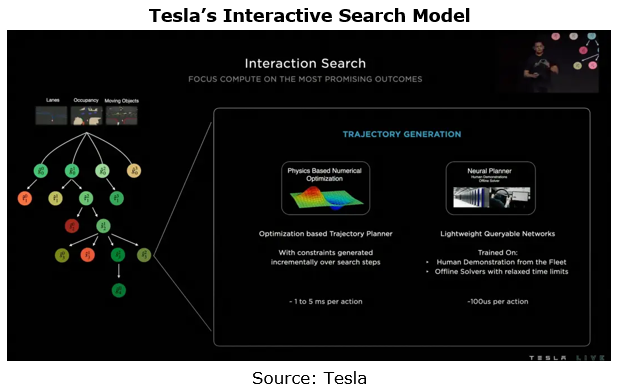

In planning and control, Tesla adopts an interactive search + evaluation model approach that combines conventional search algorithms with artificial intelligence to achieve comfortable and efficient driving:

First, candidate goals are obtained from lane lines, the occupancy network and obstacles, and decision trees and candidate goal sequences are then generated.

Second, trajectories for reaching these goals are constructed simultaneously using conventional search and neural networks.

Third, the interactions between the ego vehicle and other participants in the scene are predicted to form new trajectories, and the final trajectory is selected after multiple rounds of evaluation. During trajectory generation, Tesla applies both conventional search algorithms and neural networks. It then scores the generated trajectories by collision checks, comfort analysis, the likelihood of driver takeover and similarity to human driving, and finally decides the execution strategy.
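The scoring stage of these steps can be sketched as cost-based selection over candidate trajectories. Collision is treated as a hard constraint, and comfort, takeover likelihood and human-likeness are combined as a weighted sum. The cost formulas, weights, safety radius and the takeover proxy are hypothetical stand-ins. In Tesla's system some of these terms are produced by neural networks whose details are not given in the report.

```python
import math
from dataclasses import dataclass

Point = tuple[float, float]


@dataclass
class Candidate:
    name: str
    waypoints: list[Point]  # (x, y) in metres at a fixed time step


def min_clearance(traj, obstacles):
    """Smallest distance between any waypoint and any obstacle."""
    return min(math.dist(p, o) for p in traj for o in obstacles) if obstacles else math.inf


def comfort_cost(traj):
    """Sum of squared second differences, a proxy for acceleration discomfort."""
    return sum(
        (traj[i + 1][0] - 2 * traj[i][0] + traj[i - 1][0]) ** 2
        + (traj[i + 1][1] - 2 * traj[i][1] + traj[i - 1][1]) ** 2
        for i in range(1, len(traj) - 1)
    )


def human_likeness_cost(traj, human_traj):
    """Mean point-wise distance to a reference human-driven trajectory."""
    return sum(math.dist(a, b) for a, b in zip(traj, human_traj)) / len(traj)


def takeover_proxy(clearance, comfortable_gap=3.0):
    """Hypothetical stand-in for a learned takeover-likelihood model."""
    return max(0.0, 1.0 - clearance / comfortable_gap)


def select_trajectory(candidates, obstacles, human_traj,
                      safety_radius=1.0, weights=(1.0, 5.0, 0.5)):
    """Reject colliding candidates, then return the lowest-cost survivor."""
    w_comfort, w_takeover, w_human = weights
    best, best_cost = None, math.inf
    for cand in candidates:
        clearance = min_clearance(cand.waypoints, obstacles)
        if clearance < safety_radius:  # collision check: hard reject
            continue
        cost = (w_comfort * comfort_cost(cand.waypoints)
                + w_takeover * takeover_proxy(clearance)
                + w_human * human_likeness_cost(cand.waypoints, human_traj))
        if cost < best_cost:
            best, best_cost = cand, cost
    return best, best_cost


if __name__ == "__main__":
    human = [(float(x), 0.0) for x in range(5)]
    cands = [
        Candidate("keep_lane", human),
        Candidate("nudge_left", [(0, 0), (1, 0.5), (2, 1.5), (3, 0.5), (4, 0)]),
        Candidate("wide_left", [(0, 0), (1, 1.5), (2, 3), (3, 1.5), (4, 0)]),
    ]
    best, cost = select_trajectory(cands, [(2.0, 0.2)], human)
    print(best.name)  # nudge_left
```

In this toy scene the lane-keeping candidate passes within 0.2 m of the obstacle and is rejected. The wide swerve is safe but scores worse on comfort and human-likeness than the gentle nudge.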

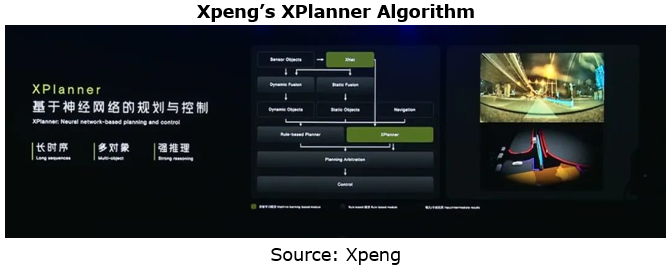

XBrain, the ultimate architecture of Xpeng's all-scenario intelligent driving, consists of XNet 2.0, a deep vision neural network, and XPlanner, a neural-network-based planning and control module. XPlanner has the following features:

Rule algorithm

Long time sequence (minute-level)

Multi-object (multi-agent decision, gaming capability)

Strong reasoning

Previously, advanced intelligent driving algorithms and ADAS functional architectures were separate and consisted of many small logic-based planning and control algorithms for individual sub-scenarios. XPlanner, by contrast, has a unified planning and control architecture. It is supported by a foundation model and by simulation training on a large number of extreme driving scenarios, which helps it cope with a wide range of complex situations.
